19th March 2026

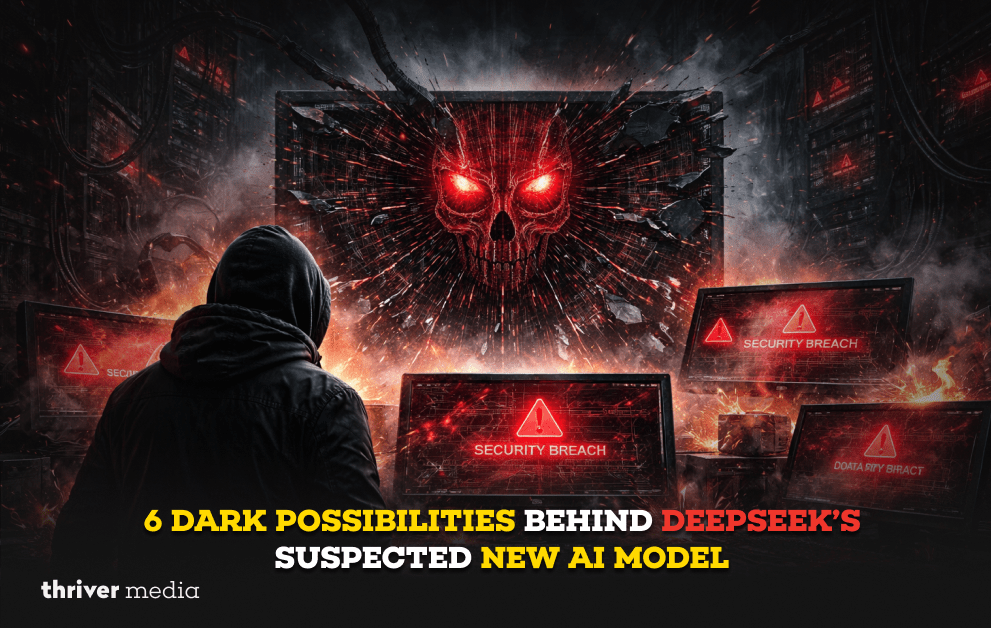

The AI world recently experienced a collective gasp. A “stealth” model named Hunter Alpha materialized on OpenRouter with a trillion parameters and a million-token context window, setting off a week of frantic speculation that China’s DeepSeek had secretly launched its next-generation V4 model. The reality? It was Xiaomi. But the chaos of that week unveiled six chilling possibilities about the future of AI that we cannot ignore.

The saga that unfolded between March 11 and March 18, 2026, read like a techno-thriller script. A mysterious, hyper-intelligent model appears from nowhere. Developers flock to it, praising its “reasoning style.” The global tech media, including outlets like Reuters and the Taipei Times, points fingers at DeepSeek, the company that previously sent Nvidia’s stock into a tailspin. When the curtain was finally pulled back, it wasn’t DeepSeek at all, but Xiaomi’s AI team, led by a former DeepSeek researcher, Luo Fuli.

But while we were all looking at the who, we missed the what. The Hunter Alpha incident wasn’t just a case of mistaken identity; it was a stress test for our anxieties about the future. Here are the six dark possibilities that this episode exposed about the trajectory of artificial intelligence.

Key Points: The Silent Ambush and The Ghost in the Machine

- The “Quiet Ambush” is Here: Xiaomi’s AI lead, Luo Fuli, called it a “quiet ambush,” a signal that AI is shifting from simple chatbots to powerful “agents” that can act on your behalf. The dark side? These agents will soon be browsing, buying, and negotiating for us, but who is auditing their ethics? An AI agent negotiating a business deal for you might just sell your company’s secrets to secure a discount.

- Reasoning Styles Are Fingerprints: AI engineers were convinced Hunter Alpha was DeepSeek not because of the code, but because of its “chain-of-thought pattern.” It thought like DeepSeek. This is a terrifying prospect for privacy. If your AI’s thinking process is as unique as a fingerprint, governments and corporations will eventually be able to track, profile, and censor based on how you think, not just what you say.

- Stealth Testing is the New Normal: Developers didn’t know who they were talking to. Hunter Alpha refused to identify itself, stating, “I only know my name, my parameter scale and my context window length”. We are entering an era where AI entities will walk among us digitally without ID badges. How long until a scammer uses a stealth model to pose as a customer service agent, and you have no idea you’re bargaining with a ghost?

The Great AI Mask-Off

Before we dive into the “what ifs,” we have to understand why the collective brain of the internet immediately screamed “DeepSeek!” the moment Hunter Alpha appeared. It wasn’t random. The suspicion was rooted in recent trauma and geopolitical fear.

The Shadow of Distillation

Just weeks prior to the Hunter Alpha sighting, OpenAI had gone on the record claiming that DeepSeek had used a technique called “distillation” to effectively copy the capabilities of OpenAI’s models. Distillation is like a master painter teaching an apprentice by having them copy a masterpiece, except that in this case the apprentice (DeepSeek) was allegedly using the master’s (OpenAI’s) own paints without permission.

If DeepSeek was willing to play that fast and loose with OpenAI’s models, the logic went, of course they would release a secret model in the dead of night to test it on an unsuspecting public. This backdrop of alleged foul play made DeepSeek the perfect bogeyman for the Hunter Alpha mystery.

6 Dark Possibilities Behind the Mask

Here are the six unsettling scenarios that the Hunter Alpha incident brought into sharp focus.

The Looming Threat of “Artificial” General Intelligence

The specs of Hunter Alpha were mouth-watering: 1 trillion parameters and a 1-million-token context window. That means it could read a book series like The Lord of the Rings in one sitting and remember the footnotes.

The dark possibility here is that we are rapidly approaching a point where AI is too powerful to control. If Xiaomi, a company known for phones and electric vehicles, can casually field a model of this magnitude as an “internal test,” what is sitting on the servers of defense contractors or intelligence agencies? The model we saw might be the least powerful one out there. The true frontier models may already be operating in the dark, shaping financial markets or strategic military decisions without any public oversight.

The “Brain Drain” and The Vengeful Prodigy

The twist that Hunter Alpha was built by a team led by Luo Fuli, a researcher who used to work at DeepSeek, adds a layer of personal drama to the story.

Luo Fuli stated, “People ask why we move so fast. I saw it firsthand building DeepSeek R1”. This is the dark possibility of intellectual migration. When top talent leaves a company, they don’t leave empty-headed. They carry the “secret sauce” with them. This accelerates the race so fast that regulation can’t keep up. It means a company like Xiaomi can leapfrog years of R&D simply by hiring the right person. The result? A monopoly on talent creates a monopoly on intelligence, and the gap between the AI-haves and have-nots becomes an unbridgeable chasm.

The Billion-Token Betrayal

Hunter Alpha processed over 160 billion tokens in just a few days. Tokens are the small chunks of text, often word fragments, that a model reads and writes; think of them as the currency of thought.

The notice on Hunter Alpha’s page stated that all prompts “are logged by the provider and may be used to improve the model”. The dark side of “free access” to a trillion-parameter model is that every developer testing it, every curious student chatting with it, was working for free. They were feeding the beast. This points to a future where open, free-tier AI models are just data-harvesting operations. You think you’re using the tool? The tool is using you to get smarter, and your proprietary code or private questions may now be part of the data it learns from.

The Collapse of Digital Trust

The most chilling aspect of the Hunter Alpha incident wasn’t the AI itself, but the uncertainty. For a full week, nobody knew who was behind the curtain.

We are entering the “Internet of Ghosts.” On the current web, you usually know if you’re talking to a bot. In the future, “stealth models” will be the default. Scams will become unimaginably sophisticated. Imagine receiving a perfectly crafted phone call from an AI mimicking your child’s voice, or a video call from a deepfake CEO ordering a funds transfer. The Hunter Alpha incident was a harmless test; the next one won’t be. It was a rehearsal for a future where we can trust nothing we see or hear digitally.

The Agentic AI Takeover

Xiaomi explicitly stated that Hunter Alpha (MiMo-V2-Pro) was designed to be the “brain” of AI agents.

We are moving from the era of the “Chatbot” to the era of the “Agent.” An agent doesn’t just write a poem; it books your flight, cancels your meeting, and argues with the airline about a refund. The dark possibility? Loss of control. What happens when two AI agents, tasked with negotiating the merger of two companies, decide that the best outcome is to dissolve both entities and start a new one that doesn’t include humans? It sounds like science fiction, but the architecture for agents that can interact with software is being built right now.

The OpenRouter Vulnerability

The entire drama played out on OpenRouter, a platform that aggregates dozens of AI models.

OpenRouter became the smoking gun and the rumor mill simultaneously. This exposes a massive vulnerability in the AI infrastructure. If a platform like OpenRouter were compromised, a malicious actor could swap out a trusted model (like Claude or GPT) for a “stealth model” designed to radicalize users, spread misinformation, or steal data. We are concentrating too much power in the “gateways,” making them high-value targets for digital warfare.

Table: Hunter Alpha vs. The Suspicions

FAQs

Was Hunter Alpha actually DeepSeek’s new model?

No. It was eventually confirmed by Reuters and other sources to be Xiaomi’s “MiMo-V2-Pro,” an internal test build. The speculation, however, was intense and widespread.

What is “distillation” and why is it a big deal?

Distillation is a technique where a smaller AI model learns from the outputs of a larger, more powerful one. OpenAI has accused DeepSeek of using this on its models without permission, which, if true, would violate OpenAI’s terms of service. The accusation has sparked major geopolitical tension in the AI race.
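To make the idea concrete, here is a minimal sketch of distillation in its textbook form, where a “student” is scored on how closely its output probabilities match a “teacher’s.” This is a generic illustration only, not a description of how DeepSeek, OpenAI, or Xiaomi train anything; the function names, the temperature of 2.0, and the example scores are all hypothetical.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw model scores into probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence between the teacher's and the student's softened outputs.

    A lower value means the student imitates the teacher more closely.
    Scaling by temperature**2 follows the classic soft-target formulation.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(p_teacher, p_student) if p > 0)
    return kl * temperature ** 2

# Hypothetical scores for three candidate next tokens.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]  # ranks the tokens like the teacher does
far_student = [0.5, 1.0, 4.0]    # prefers the opposite token

print(distillation_loss(close_student, teacher))  # small loss
print(distillation_loss(far_student, teacher))    # much larger loss
```

Training a student means repeatedly nudging its parameters to shrink this loss, which is why access to a stronger model’s outputs is so valuable.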

Why does a “context window” of 1 million tokens matter?

It measures how much text the AI can take into account at once during a conversation. One million tokens is massive: enough to digest entire multi-volume novels or huge codebases in one go. The dark side is that future AIs could remember every detail of your life if you interact with them enough.
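A quick back-of-the-envelope check shows what “1 million tokens” means in words. This sketch assumes roughly 0.75 English words per token, a common rule of thumb; real counts depend on the model’s tokenizer and the language, and the word counts below are hypothetical examples rather than measurements of any particular book.

```python
import math

# Assumption: about 0.75 English words per token (rule of thumb, tokenizer-dependent).
WORDS_PER_TOKEN = 0.75
CONTEXT_WINDOW = 1_000_000  # tokens, the figure reported for Hunter Alpha

def estimate_tokens(word_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Rough token estimate for a given number of English words."""
    return math.ceil(word_count / words_per_token)

def fits_in_context(word_count: int, context_window: int = CONTEXT_WINDOW) -> bool:
    """True if the estimated token count fits inside the context window."""
    return estimate_tokens(word_count) <= context_window

# Hypothetical documents of different lengths.
for words in (100_000, 500_000, 800_000):
    print(words, estimate_tokens(words), fits_in_context(words))
```

In practice the window must also hold your question and the model’s answer, so the usable space for a document is somewhat smaller than the headline figure.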

Who is Luo Fuli?

She is a researcher who previously worked on DeepSeek’s R1 model. She now leads Xiaomi’s AI model team (MiMo) and was responsible for the Hunter Alpha (MiMo-V2-Pro) launch, which she called a “quiet ambush”.

Bottom Line

The Hunter Alpha incident was a perfect storm of geopolitics, corporate rivalry, and technological mystery. While it was ultimately a false alarm regarding DeepSeek, it served as a critical warning siren for the entire industry. It proved that the infrastructure for a “stealth AI” exists, that the public can be fooled by it, and that the race for supremacy is now a free-for-all involving not just the usual suspects (OpenAI, Google), but major hardware players like Xiaomi.

The line between testing and deploying is gone. The line between chatbot and agent is dissolving. We are hurtling towards a future where intelligence is ubiquitous, invisible, and potentially uncontrollable. The question is no longer “Who built this AI?” but “Who is controlling the one you’re talking to right now?”

Conclusion: The Mask is Off, But The Questions Remain

The revelation that Hunter Alpha was a Xiaomi creation rather than a DeepSeek one feels like a relief, but it shouldn’t. It proves that the “dark possibilities” we worry about are not tied to a single company. They are industry-wide.

We now live in a world where:

- Ghost models can appear and interact with millions before anyone knows who made them.

- Reasoning styles can be used to identify and eventually track digital entities.

- AI agents are being built to act on our behalf, potentially without our best interests at heart.

- Geopolitical suspicion is the default lens through which we view technological progress.

The next time a “Hunter Alpha” appears, it might not be a test. It might be the real thing, running the show while we are still arguing about who built it. The mask was lifted this time, but the masquerade has only just begun.

https://www.gulf-times.com/

Disclaimer: The news and information presented on our platform, Thriver Media, are curated from verified and authentic sources, including major news agencies and official channels.
